AB Test Significance, Debunked

What do your test results mean? Enter your data to calculate your AB test's significance

Enter the data from your “A” and “B” pages into the AB test calculator to see if your results have reached statistical significance. Most AB testing experts use a significance level of 95%, which means that 19 times out of 20, your results will not be due to chance.

A/B Test Significance Calculator

Traffic

Conversion

Traffic

Conversion

CALCULATE

Conversion Rate

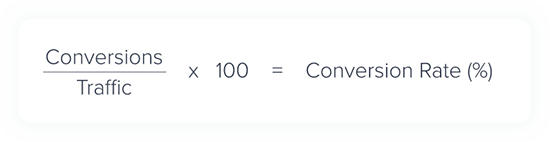

This is the number of conversions you expect to get for every visitor on your page. It is given as a percentage and calculated like this:

AB testing is the best way to make sure you increase your conversion rate in the long run.

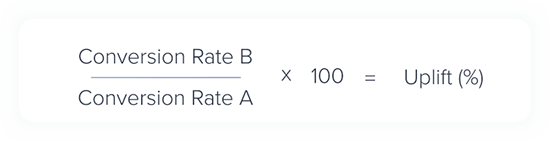

Uplift

Uplift is the relative increase in conversion rate between page A and page B. It is possible to have negative uplift if your original page is more effective than the new one. It is calculated like this:

It is important to remember that this is an increase in the rate conversions, not in absolute sales.

AB Test Significance

Within AB testing statistics, your results are considered “significant” when they are very unlikely to have occurred by chance. Achieving statistical significance with a 95% Confidence Level means you know that your results will only occur by chance once in every 20 times.

P-Value

Your P-Value is the probability that your results have occurred as a result of random chance. If this number is lower than your “Alpha” value (which is just 100 minus your Confidence Level) then your results are significant.

A high P-Value means your results are not significant. It could be due to your sample size, the size of your Uplift, or the way your data is scattered.

To increase the significance of your AB tests, you need to do one of three things:

- Increase your sample size

- Create a larger Uplift

- Produce more consistent data (with less variance)

In practical terms, that means there are a few things you can do…

Practical Steps To Increase Your Sample Size

1) Run your tests for longer, risking the chance that your data will be polluted. Most browsers delete any cookies within one month (some delete them after two weeks). Since AB testing tools use cookies to sort your visitors into group A and group B, long tests run the risk of cross-sampling.

2) Direct more of your traffic to your test pages. You can do that by including a link from the homepage, using your test as a landing page or by adjusting the order of your pages. Unfortunately, this also runs the risk of creating a bias, since your traffic will be made up of different kinds of people (searching things).

Practical Steps To Create a Larger Uplift

1) Try a more significant variation. Button colours, CTA text and titles can make a big impact, but only in some cases. Other times, more substantial edits are needed to create an effect. Your variations are most likely to create an Uplift when they communicate your offer or your value in a new way.

2) One of the most successful variations to try is a version of your webpage with persuasive notifications installed. These grab your visitors’ attention and can be used to create psychological effects such as Social Proof, FOMO and Urgency.

3) Another way to create Uplift is to reduce Friction on your page. You can do this by removing the number of fields in a form, adding helpful text or visual cues, or with Friction notifications.

How to produce more consistent data

Particular dates can have an unpredictable effect on your conversion rate. If you combine an event like Black Friday with a Multi-Armed Bandit, your tests could produce increased variance. Try running your test on specific days, or specific dates, to exclude anomalies.

It’s tempting to rush in and edit your website as soon as your test reaches significance. Here’s why you shouldn’t…

- Statistical significance does not mean practical importance. There may be other things you should change first.

- Even AB test with statistical significance can still be false positives. It’s best to change things gradually.

- Optimising one part of your website can have a negative effect on another part, and optimising for one metric (conversions) may have a negative effect on another metric (return customers).

So, the best idea is to change things slowly and keep an eye on all of the KPIs for your whole website. You should also take care to avoid any of the classic mistakes associated with AB test significance.

Classic Errors with AB Test Significance

- Thinking significance is the likelihood that your B page is better than your A page. In fact, it is only the probability that your results are not due to chance.

- Thinking a significant result “proves” that one approach is better than another. You can’t prove such a generalised hypothesis with AB testing. Instead, you can show that:

There is a significant probability that an Uplift occurred during your test, with your given sample and in the context of your website.

- Thinking that your customers “preferred” the B version of your page. All you are measuring is the impact your changes have on your customers’ behaviour – not how it affects their perceptions.